Hypothesis generation engines built on literature-based knowledge graphs are drawing growing attention in the scientific community. These systems draw on large repositories of published research to surface connections that individual researchers, who can read only a fraction of the literature, are likely to miss. Combining artificial intelligence, data mining, and domain expertise, they could accelerate discovery across many fields of study.

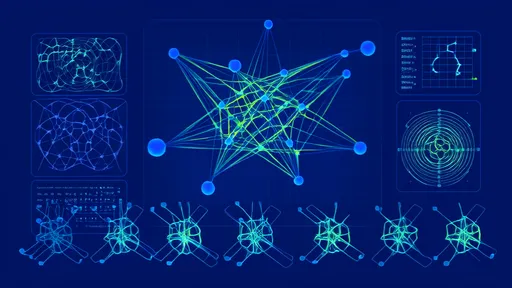

Knowledge graphs have emerged as the backbone of this new paradigm, structuring millions of research papers, clinical trials, and experimental results into interconnected networks of concepts. Unlike traditional literature reviews that rely on keyword searches, these dynamic representations capture relationships between entities—genes interacting with diseases, chemicals inhibiting biological pathways, or theoretical frameworks contradicting empirical evidence. The true power emerges when machine learning algorithms traverse these networks to propose novel hypotheses worth testing.
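To make the idea of traversing a graph to propose hypotheses concrete, here is a minimal sketch in Python. It assumes the literature has already been reduced to (subject, relation, object) triples, and it proposes a link wherever two entities are connected through an intermediate concept but not directly. The entity names, the relation labels, and the `propose_two_hop` helper are all hypothetical and chosen for illustration; production systems typically use learned scoring models rather than plain path enumeration.

```python
from collections import defaultdict

# Hypothetical triples; a real system would extract these from papers.
TRIPLES = [
    ("compound_X", "inhibits", "protein_P"),
    ("protein_P", "activates", "pathway_Q"),
    ("pathway_Q", "associated_with", "disease_D"),
    ("compound_X", "treats", "disease_E"),
    ("protein_P", "associated_with", "disease_D"),
]


def build_graph(triples):
    """Index outgoing edges by subject: subject -> [(relation, object), ...]."""
    graph = defaultdict(list)
    for subj, rel, obj in triples:
        graph[subj].append((rel, obj))
    return graph


def propose_two_hop(graph, start):
    """Return entities reachable from `start` in exactly two hops that have
    no direct edge from `start`, with the paths that connect them."""
    direct = {obj for _, obj in graph.get(start, [])}
    candidates = defaultdict(list)
    for rel1, mid in graph.get(start, []):
        for rel2, end in graph.get(mid, []):
            if end != start and end not in direct:
                candidates[end].append((rel1, mid, rel2))
    return dict(candidates)


if __name__ == "__main__":
    graph = build_graph(TRIPLES)
    for target, paths in propose_two_hop(graph, "compound_X").items():
        for rel1, mid, rel2 in paths:
            print(f"compound_X -{rel1}-> {mid} -{rel2}-> {target}")
```

Each printed path is only a candidate for human review: the graph says nothing about whether the two relations actually compose into a meaningful effect.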

Recent advances in natural language processing have enabled the extraction of nuanced scientific relationships from unstructured text. Where earlier systems might have simply counted co-occurrences of terms, contemporary models can discern whether a paper reports activation, inhibition, or correlation between variables. This semantic understanding allows hypothesis engines to distinguish between established knowledge and frontier speculation, weighting connections appropriately when suggesting new research directions.

The most sophisticated platforms now incorporate temporal dimensions, recognizing that scientific credibility evolves. A controversial claim from a 1990s single-study paper carries different weight than a mechanism replicated across twenty recent publications. By modeling how consensus forms and shifts, these systems can identify "knowledge gaps"—areas where available evidence neither strongly supports nor refutes potential relationships, marking prime territory for investigation.
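One simple way to picture this kind of temporal weighting is an exponential recency decay applied to each piece of evidence, followed by a check on whether the weighted evidence leans clearly in either direction. The sketch below is an illustration under assumed parameters, not a description of any particular platform: the ten-year half-life, the `min_mass` and `margin` thresholds, and the encoding of each study as a `(year, stance)` pair with stance `+1` (supports) or `-1` (refutes) are all hypothetical.

```python
def recency_weight(year, now, half_life=10.0):
    """Weight that halves for every `half_life` years of age (assumed decay)."""
    return 0.5 ** ((now - year) / half_life)


def evidence_summary(records, now, half_life=10.0):
    """Return (net stance in [-1, 1], total recency-weighted evidence mass).

    `records` is a list of (year, stance) pairs, stance being +1 or -1.
    """
    weights = [recency_weight(year, now, half_life) for year, _ in records]
    mass = sum(weights)
    if mass == 0:
        return 0.0, 0.0
    net = sum(w * stance for w, (_, stance) in zip(weights, records)) / mass
    return net, mass


def classify(records, now, min_mass=2.0, margin=0.5):
    """Label a relationship 'supported', 'refuted', or 'gap'.

    A gap means too little weighted evidence, or evidence too evenly split.
    Both thresholds are illustrative assumptions.
    """
    net, mass = evidence_summary(records, now)
    if mass < min_mass or abs(net) < margin:
        return "gap"
    return "supported" if net > 0 else "refuted"


if __name__ == "__main__":
    single_old_study = [(1995, +1)]
    replicated = [(2023, +1)] * 20
    contested = [(2024, +1)] * 5 + [(2024, -1)] * 5
    for name, recs in [("single old study", single_old_study),
                       ("replicated", replicated),
                       ("contested", contested)]:
        print(name, "->", classify(recs, now=2025))
```

Under these assumptions a lone 1995 study and an evenly contested claim both register as gaps, while a mechanism replicated across twenty recent papers registers as supported.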

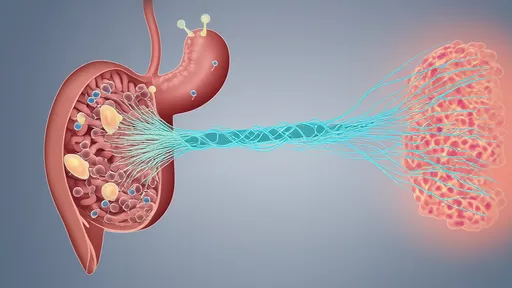

In biomedicine, such engines have proposed unexpected drug repurposing opportunities by connecting compounds to diseases through shared protein targets or metabolic pathways. One notable case identified an anti-inflammatory medication as a candidate for neurodegenerative disease prevention by recognizing parallels in cellular stress responses across seemingly unrelated conditions. These non-obvious suggestions often combine findings from specialties that rarely cross-pollinate in traditional research.
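The shared-target reasoning described above can be sketched as a set-overlap ranking. The example assumes two lookup tables, one mapping drugs to their protein targets and one mapping diseases to implicated proteins, and ranks drugs not yet indicated for a disease by the Jaccard similarity of the two protein sets. All drug, disease, and protein names are placeholders, and Jaccard similarity is just one plausible scoring choice made for this illustration.

```python
def jaccard(a, b):
    """Jaccard similarity of two sets; 0.0 when both are empty."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def rank_repurposing(drug_targets, disease_proteins, disease, known_indications):
    """Rank drugs not already indicated for `disease` by target overlap.

    Returns a list of (drug, score, shared_proteins), best score first.
    """
    implicated = disease_proteins[disease]
    ranked = []
    for drug, targets in drug_targets.items():
        if disease in known_indications.get(drug, set()):
            continue  # already an established use, not a new hypothesis
        shared = targets & implicated
        if shared:
            ranked.append((drug, jaccard(targets, implicated), sorted(shared)))
    # Sort by descending score, breaking ties alphabetically by drug name.
    ranked.sort(key=lambda row: (-row[1], row[0]))
    return ranked


# Placeholder data for illustration only.
DRUG_TARGETS = {
    "drug_A": {"P1", "P2"},
    "drug_B": {"P2", "P3", "P4"},
    "drug_C": {"P9"},
    "drug_D": {"P2", "P3"},
}
DISEASE_PROTEINS = {"disease_N": {"P2", "P3"}}
KNOWN_INDICATIONS = {"drug_A": {"disease_M"}, "drug_D": {"disease_N"}}

if __name__ == "__main__":
    for drug, score, shared in rank_repurposing(
        DRUG_TARGETS, DISEASE_PROTEINS, "disease_N", KNOWN_INDICATIONS
    ):
        print(f"{drug}: score={score:.2f}, shared targets={shared}")
```

A high overlap score marks a candidate worth examining, not evidence of efficacy; the direction of each drug-target effect and the biology of the disease still have to be checked by domain experts.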

Theoretical physics has similarly benefited from computational hypothesis generation. Knowledge graphs encompassing mathematical formulations across subfields have helped surface potential unifications between quantum mechanics and gravity theories by detecting structural similarities in equations that human theorists had approached from different perspectives. The systems don't prove connections but highlight avenues where focused human creativity might yield breakthroughs.

Critics caution that these tools risk perpetuating biases in the scientific literature or generating plausible-but-erroneous suggestions. A hypothesis engine trained predominantly on Western journal articles might overlook traditional medicine insights, while statistical patterns could mistake correlated phenomena for causal relationships. Leading developers now implement safeguards including uncertainty quantification, alternative interpretation displays, and explicit marking of inferential leaps in the reasoning chain.

Ethical considerations loom large as these technologies mature. Should an AI-generated hypothesis be patentable? How do we attribute credit when a computer suggests a research direction that leads to a Nobel-caliber discovery? The research community grapples with these questions even as the tools demonstrate tangible value in pilot programs. Some journals now require disclosure of computational hypothesis generation in methods sections, treating the software as another instrument in the scientific toolkit.

Looking ahead, the integration of experimental data streams promises to create closed-loop systems where hypotheses are not only generated but rapidly tested through automated laboratories. Early prototypes connect knowledge graphs with robotic experiment platforms that can screen hundreds of material combinations or genetic edits, feeding results back to refine subsequent suggestions. This tight iteration cycle could compress years of traditional research into months or weeks.

The ultimate test for these systems lies not in their technical sophistication but in their ability to expand human understanding. The most successful implementations augment rather than replace researcher intuition—surfacing unexpected connections while leaving the profound work of theory-building and experimental design to scientists. As the tools grow more prevalent, they may redefine what it means to "stand on the shoulders of giants," giving researchers access not just to published conclusions but to the latent potential hidden in the relationships between them.

For all their promise, hypothesis generation engines remain works in progress. The core principles of the scientific method (empirical validation, peer critique, and reproducible results) apply equally to computer-suggested and human-conceived ideas. What these systems already offer is a way to navigate a rapidly growing scientific literature, so that researchers can focus their creativity where it matters most.

By /Jul 28, 2025
